NVIDIA AI Infrastructure Explained: How NVIDIA Is Powering the Global AI Revolution in 2026

- Shaikhmuizz javed


Muizz Shaikh|AI Researcher & Founder, FourFold AI | LinkedIn | fourfoldai.com | May 8, 2026 | 12 min read

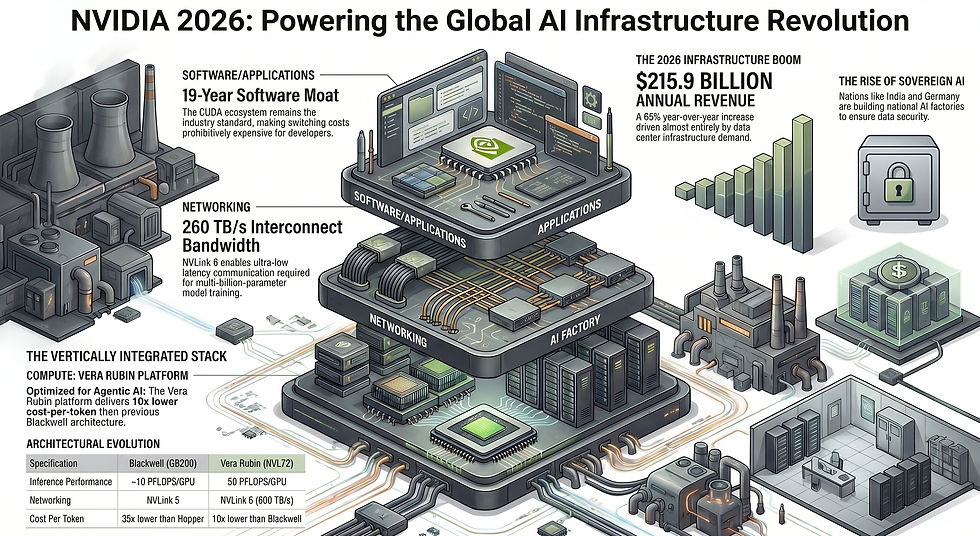

⚡ Quick Answer: NVIDIA AI infrastructure in 2026 refers to the company's full-stack ecosystem, spanning GPUs (Blackwell, Vera Rubin), networking (NVLink, Spectrum-X), software (CUDA, AI Enterprise), and AI factory blueprints, that powers the global build-out of training clusters, inference factories, and sovereign AI clouds. NVIDIA's FY2026 revenue reached $215.9 billion, driven almost entirely by this infrastructure wave. [Source: NVIDIA FY2026 SEC Earnings Filing]

Introduction: Beyond the GPU Company Label

For most of the 2010s, NVIDIA was primarily known as the company that made graphics cards for gamers. That description aged quickly. By 2026, NVIDIA AI infrastructure is the backbone of the most significant capital expenditure cycle in the history of technology — a build-out that makes the dot-com era look modest in comparison.

Consider this: NVIDIA's FY2026 full-year revenue hit $215.9 billion — up 65% year-over-year — with data center revenue accounting for the overwhelming majority of that figure [Source: NVIDIA FY2026 Earnings, SEC Filing]. That is not a chip company's revenue profile. That is the profile of an industrial infrastructure provider.

What changed? NVIDIA systematically built — over two decades — a hardware-software-networking stack so deeply embedded in how AI research and deployment actually works that switching away from it costs more than it saves. And then, right as that moat was being stress-tested, the company shipped the Blackwell architecture and announced the Vera Rubin platform, extending its lead by a margin that most competitors will spend years chasing.

This article breaks down exactly how that infrastructure works, why it matters beyond the headline revenue numbers, and where things are headed through 2030.

$215.9B NVIDIA FY2026 Full-Year Revenue (+65% YoY)

$68.1B Q4 FY2026 Revenue Record (+73% YoY)

~80% AI Accelerator Market Share (Training)

$1T+ Blackwell & Vera Rubin Purchase Orders Through 2027
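As a quick sanity check on the headline figures above, the growth rates can be inverted to see what prior-period revenue they imply. This is a minimal sketch that uses only the numbers quoted in this article; the helper name `implied_prior` is illustrative, and the results are back-of-the-envelope values, not reported figures.

```python
def implied_prior(current: float, yoy_growth_pct: float) -> float:
    """Back out the prior-period value from a current value and its YoY growth."""
    return current / (1 + yoy_growth_pct / 100)


# Figures quoted in the article, in billions of US dollars.
fy2026_revenue_b = 215.9
q4_fy2026_revenue_b = 68.1

# Invert the +65% and +73% growth rates quoted alongside them.
fy2025_implied_b = implied_prior(fy2026_revenue_b, 65)
q4_fy2025_implied_b = implied_prior(q4_fy2026_revenue_b, 73)

# Share of the full fiscal year that the record fourth quarter represents.
q4_share = q4_fy2026_revenue_b / fy2026_revenue_b

print(f"Implied FY2025 revenue: ${fy2025_implied_b:.1f}B")
print(f"Implied Q4 FY2025 revenue: ${q4_fy2025_implied_b:.1f}B")
print(f"Q4 share of FY2026 revenue: {q4_share:.1%}")
```

The Q4 share coming in well above a quarter of the year is consistent with revenue that was still accelerating into the end of the fiscal year.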

Defining NVIDIA AI Infrastructure: The Full-Stack Ecosystem

🎯 BLUF — Bottom Line Up Front

NVIDIA AI infrastructure is not just GPUs. It is a vertically integrated stack — compute (GPUs, CPUs), interconnects (NVLink, Spectrum-X), software (CUDA, cuDNN, AI Enterprise), and reference factory designs — that functions as a complete system. You cannot extract one layer without degrading the rest. That is both its power and its strategic lock-in.

The most useful analogy here is electricity. When a factory in 1920 decided to electrify its operations, it did not just buy a motor. It bought transformers, switchgear, wiring, protocols, and trained workers. The ecosystem around electricity was the real infrastructure. NVIDIA has engineered the same dynamic for AI compute.

The full-stack infrastructure breaks down as follows:

Compute layer: Blackwell and Vera Rubin GPUs, Grace/Vera CPUs, HBM memory

Interconnect layer: NVLink 6 (scale-up), Spectrum-X Ethernet and Quantum-X InfiniBand (scale-out)

Data processing: BlueField-4 DPUs, ConnectX-9 SuperNICs

Software layer: CUDA, cuDNN, NCCL, TensorRT-LLM, NeMo, AI Enterprise

Systems layer: DGX SuperPODs, NVL72 rack-scale systems, AI factory reference designs

Deployment layer: NVIDIA Cloud Partners, sovereign AI factory blueprints

Every layer is engineered to work best when the adjacent layers are also NVIDIA's. That is not an accident — it is the strategy.

The Three Pillars of NVIDIA's Dominance

🎯 BLUF

NVIDIA's competitive position rests on three interlocking pillars: the CUDA software moat (19+ years of developer investment), a proprietary networking stack (NVLink and Spectrum-X) that optimizes GPU-to-GPU communication, and hardware-software co-design that makes competing on individual components nearly impossible. Together, they create switching costs that exceed performance advantages for most buyers.

Pillar 1 — The CUDA Moat

CUDA is frequently described as NVIDIA's "software moat," but that framing undersells what it actually is. The moat is not CUDA itself — it is 19 years of accumulated ecosystem: cuDNN, cuBLAS, NCCL, PyTorch/TensorFlow optimizations, the Nsight toolchain, and millions of developers who have built their workflows around these libraries.

Every major ML framework (PyTorch, JAX, TensorFlow) ships with mature CUDA support, and PyTorch in particular is optimized for CUDA first. Custom kernels written by research labs at Meta, Google, and Microsoft are CUDA kernels. Graduate students entering AI research learn CUDA. Switching to a competing platform does not just mean buying different hardware; it means rebuilding years of software investment. For most organizations, that cost exceeds any performance advantage a competing chip might offer.

Pillar 2 — AI Networking (Spectrum-X and NVLink)

Modern AI training does not run on a single GPU — it runs across thousands of them, coordinated through high-speed interconnects. NVIDIA's Spectrum-X Ethernet and NVLink fabric handle this coordination, optimized for AI workloads in ways standard networking cannot match.

In the Vera Rubin NVL72 rack, NVLink 6 delivers 260 terabytes per second of aggregate connectivity between GPUs at low latency, the bandwidth required to run synchronous, multi-billion-parameter model training without communication bottlenecks.
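To make the bandwidth figure concrete, here is a rough sketch of what it implies for gradient synchronization. It splits the quoted 260 TB/s evenly across the rack's 72 GPUs and applies the standard ring all-reduce traffic formula. The 70-billion-parameter model and 2-byte gradients are hypothetical inputs, and treating the whole per-GPU figure as usable send bandwidth is an optimistic assumption, so the result is a lower bound rather than a measured time.

```python
# Figures quoted in the article for the Vera Rubin NVL72 rack.
RACK_NVLINK_TB_PER_S = 260.0
GPUS_PER_RACK = 72


def per_gpu_nvlink_tb_per_s(rack_tb_per_s: float = RACK_NVLINK_TB_PER_S,
                            gpus: int = GPUS_PER_RACK) -> float:
    """Split the rack's aggregate NVLink bandwidth evenly across its GPUs."""
    return rack_tb_per_s / gpus


def ring_allreduce_seconds(num_params: float, bytes_per_param: int,
                           gpus: int, gpu_tb_per_s: float) -> float:
    """Bandwidth-only lower bound for one ring all-reduce of the gradients.

    In a ring all-reduce each GPU sends 2 * (N - 1) / N times the buffer size.
    Latency, protocol overhead, and compute overlap are ignored, and the full
    per-GPU figure is assumed to be usable for sending (an optimistic choice).
    """
    buffer_bytes = num_params * bytes_per_param
    bytes_sent_per_gpu = 2 * (gpus - 1) / gpus * buffer_bytes
    return bytes_sent_per_gpu / (gpu_tb_per_s * 1e12)


# Hypothetical workload: a 70-billion-parameter model with 2-byte gradients.
link = per_gpu_nvlink_tb_per_s()
seconds = ring_allreduce_seconds(70e9, 2, GPUS_PER_RACK, link)

print(f"Per-GPU NVLink bandwidth: {link:.2f} TB/s")
print(f"Ideal all-reduce time: {seconds * 1000:.1f} ms")
```

Under these assumptions a full gradient exchange fits in well under a tenth of a second, which is the sense in which the interconnect keeps synchronous training from stalling on communication.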

Pillar 3 — Hardware-Software Lock-In

NVIDIA's TensorRT-LLM inference optimization stack is tuned specifically for NVIDIA GPUs, so LLM inference on that hardware typically runs significantly faster than an unoptimized baseline. New model architectures often gain TensorRT-LLM support early, sometimes before comparable support exists on other platforms. If you want to deploy the newest, fastest version of a frontier model at production scale, NVIDIA hardware is almost always the path of least resistance.

The AI Factory Revolution: Manufacturing Intelligence at Scale

🎯 BLUF

An AI factory is a purpose-built computing facility that "manufactures" tokens — the outputs of AI models — the same way a car plant manufactures vehicles. NVIDIA's AI factory reference designs specify exactly how compute, networking, storage, power, and cooling integrate to maximize tokens-per-watt and minimize cost-per-token at gigawatt scale.

The "AI factory" concept is one of the most important mental models in understanding the current infrastructure wave. Jensen Huang, NVIDIA's founder and CEO, has consistently used this framing: AI factories are industrial facilities that take in data and energy, and produce intelligence in the form of model tokens.

Training Factories vs. Inference Factories

These are meaningfully different workloads that require different infrastructure profiles:

Training factories are about raw throughput at scale — running massive parallel compute jobs for days or weeks. They prioritize GPU utilization, high-bandwidth interconnects, and storage I/O. A training factory might run a single job across 10,000+ GPUs simultaneously.

Inference factories are about latency, throughput, and cost-per-token at continuous operation. They serve millions of user requests in real time. The economics are entirely different: you need to minimize idle compute while maintaining response times measured in milliseconds.

NVIDIA's Vera Rubin platform was specifically engineered to close the gap between these two modes. The NVL72 achieves up to 10x higher inference throughput per watt at one-tenth the cost per token compared to Blackwell, consistent with the generational comparison later in this article. That is the metric that determines whether deploying AI agents at scale is economically viable [Source: NVIDIA Vera Rubin Newsroom, March 2026].
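The two inference metrics discussed here, cost per token and tokens per watt, are simple ratios, and a short sketch shows how a 10x throughput gain at constant power and cost flows through both. Every input below (hourly cost, throughput, rack power) is a hypothetical placeholder chosen for illustration, not an NVIDIA figure.

```python
def cost_per_million_tokens(cost_per_hour: float, tokens_per_second: float) -> float:
    """Dollars spent per one million generated tokens."""
    tokens_per_hour = tokens_per_second * 3600
    return cost_per_hour / tokens_per_hour * 1_000_000


def tokens_per_joule(tokens_per_second: float, power_watts: float) -> float:
    """Tokens per second per watt, which is the same as tokens per joule."""
    return tokens_per_second / power_watts


# Hypothetical baseline rack: $300/hour all-in, 100,000 tokens/s, 120 kW.
base_cost = cost_per_million_tokens(300.0, 100_000)
base_efficiency = tokens_per_joule(100_000, 120_000)

# Same hourly cost and power, but 10x the throughput.
next_cost = cost_per_million_tokens(300.0, 1_000_000)
next_efficiency = tokens_per_joule(1_000_000, 120_000)

print(f"Baseline: ${base_cost:.3f} per 1M tokens, {base_efficiency:.2f} tokens/J")
print(f"10x rack: ${next_cost:.3f} per 1M tokens, {next_efficiency:.2f} tokens/J")
print(f"Cost ratio: {base_cost / next_cost:.1f}x lower")
```

The point of the sketch is that throughput per watt and cost per token move together only when power and hourly cost stay flat; if the newer rack costs more per hour, the cost-per-token gain shrinks accordingly.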

🖥️ Rack-Scale Compute Flow — Vera Rubin NVL72

User Request / Training Job → ConnectX-9 SuperNIC → BlueField-4 DPU → Vera CPU (36 units) → NVLink 6 Fabric (260 TB/s) → Rubin GPU (72 units · 3.6 exaFLOPS) → HBM4 Memory (20.7 TB · 1.6 PB/s) → Token Output / Model Update

Every component in that flow was designed by NVIDIA — or to NVIDIA's specifications — specifically to eliminate bottlenecks at each handoff. The rack itself is cable-free with modular tray designs enabling 18x faster assembly and serviceability versus Blackwell [Source: NVIDIA Rubin Platform Newsroom]. At the scale of building hundreds of racks per month, that operational detail is genuinely significant.
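The rack-level totals in the flow above are easier to compare with single-GPU specifications once they are divided through by the unit counts. The sketch below does only that arithmetic on the figures quoted in this article; the dictionary keys are illustrative names, and an even split across GPUs is an assumption.

```python
# Rack-level figures for the Vera Rubin NVL72 as quoted in the article.
RACK = {
    "gpus": 72,
    "cpus": 36,
    "nvfp4_exaflops": 3.6,
    "hbm4_capacity_tb": 20.7,
    "hbm4_bandwidth_pb_per_s": 1.6,
    "nvlink_tb_per_s": 260.0,
}


def per_gpu_view(rack: dict) -> dict:
    """Divide rack totals evenly across GPUs (assumes a uniform split)."""
    gpus = rack["gpus"]
    return {
        "nvfp4_pflops": rack["nvfp4_exaflops"] * 1000 / gpus,
        "hbm4_capacity_gb": rack["hbm4_capacity_tb"] * 1000 / gpus,
        "hbm4_bandwidth_tb_per_s": rack["hbm4_bandwidth_pb_per_s"] * 1000 / gpus,
        "nvlink_tb_per_s": rack["nvlink_tb_per_s"] / gpus,
        "gpus_per_cpu": gpus / rack["cpus"],
    }


per_gpu = per_gpu_view(RACK)
for name, value in per_gpu.items():
    print(f"{name}: {value:.2f}")
```

The per-GPU compute figure recovered this way matches the 50 PFLOPS per GPU listed in the comparison table, which is a useful cross-check that the rack and GPU numbers describe the same system.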

Architectural Evolution: From Blackwell to Vera Rubin

🎯 BLUF

The shift from Hopper to Blackwell delivered a performance improvement of up to roughly 30x for AI inference workloads, by NVIDIA's figures. Moving from Blackwell to Vera Rubin adds roughly another 5x in per-GPU inference compute (50 versus about 10 PFLOPS at NVFP4) and a 10x cost-per-token reduction. Vera Rubin is the first NVIDIA platform designed from the ground up for agentic AI, where multiple AI models coordinate in real time to complete multi-step tasks.

| Specification | Hopper (H100) | Blackwell (GB200) | Vera Rubin (NVL72) |
| --- | --- | --- | --- |
| Inference Performance (NVFP4) | No native FP4 support | ~10 PFLOPS/GPU | 50 PFLOPS/GPU · 3.6 exaFLOPS/rack |
| Memory Bandwidth | 3.35 TB/s per GPU (HBM3) | 8 TB/s per GPU (HBM3e) | 1.6 PB/s per rack (HBM4) |
| NVLink Generation | NVLink 4 | NVLink 5 | NVLink 6 (260 TB/s per rack) |
| Cost Per Token | Baseline | ~35x lower than Hopper (agentic AI) | ~10x lower than Blackwell |
| MoE Training Efficiency | Baseline | Baseline GPU count | 4x fewer GPUs needed |
| Transistor Count | 80B | 208B | 336B (dual-die, TSMC 3nm) |
| Assembly Speed | Standard | Standard | 18x faster (cable-free modular) |
| Confidential Computing | GPU-level | GPU-level | Full rack-scale (CPU + GPU + NVLink) |

The Vera Rubin platform's extreme co-design philosophy is worth dwelling on. NVIDIA did not design a faster GPU and then figure out the rest. It designed six chip types simultaneously — the Vera CPU, Rubin GPU, NVLink 6 Switch, ConnectX-9 SuperNIC, BlueField-4 DPU, and Spectrum-6 Ethernet switch — treating the entire NVL72 rack as a single compute unit [Source: Tom's Hardware, Jan 2026].

Powering Agentic AI: Why Inference Scale Is the New Frontier

🎯 BLUF

Agentic AI — where models autonomously plan, execute, and iterate across multi-step tasks — generates dramatically more inference compute demand than simple question-answering. A single agentic workflow can require dozens of model calls. At production scale across millions of users, this creates a structural demand explosion for real-time inference infrastructure that Blackwell and Vera Rubin are specifically engineered to address.

The shift from models that answer questions to models that do things changes the infrastructure calculus entirely. When a user asks a chatbot a single question, the system makes one inference call. When an agentic AI system plans a research project, writes and tests code, browses the web for sources, and compiles a report — it might make 50 to 200 inference calls in a single session.

Multiply that across millions of enterprise users, and the inference compute requirement becomes something closer to industrial utility-scale power generation. This is precisely why NVIDIA's emphasis on tokens per watt and cost per token is not marketing language — it is the fundamental economic variable that determines whether agentic AI is deployable at scale.
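The multiplication described above can be sketched in a few lines. The calls-per-session range comes from this article; the user count and sessions per day are hypothetical inputs chosen only to show how the totals scale.

```python
def daily_inference_calls(users: int, sessions_per_user: int,
                          calls_per_session: int) -> int:
    """Total model calls per day for a given usage pattern."""
    return users * sessions_per_user * calls_per_session


# Hypothetical deployment: 1 million users, 5 sessions each per day.
USERS = 1_000_000
SESSIONS_PER_USER = 5

# One call per chatbot question; 50 to 200 calls per agentic session (from the article).
chatbot_calls = daily_inference_calls(USERS, SESSIONS_PER_USER, 1)
agentic_low = daily_inference_calls(USERS, SESSIONS_PER_USER, 50)
agentic_high = daily_inference_calls(USERS, SESSIONS_PER_USER, 200)

print(f"Chatbot:        {chatbot_calls:,} calls/day")
print(f"Agentic (low):  {agentic_low:,} calls/day")
print(f"Agentic (high): {agentic_high:,} calls/day")
```

Call counts are only a proxy for compute, since agentic calls often carry longer contexts than a single chat turn, so the real compute multiple could be higher than the call multiple.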

Blackwell Ultra delivers up to 50x better performance and 35x lower cost for agentic AI compared to Hopper [Source: SemiAnalysis InferenceX benchmark, cited in NVIDIA FY2026 8-K]. Vera Rubin extends that by another order of magnitude — making mass-market agentic AI not just technically possible, but economically rational.
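If the two generational cost claims are taken at face value and assumed to compose, the cumulative effect can be worked out directly. This is a rough sketch: the 35x and 10x factors are the ones quoted in this article, the $10 Hopper baseline is a hypothetical number, and multiplying factors drawn from different benchmarks and workloads is itself an assumption.

```python
from math import prod

# Cost-per-token improvement factors as quoted in the article.
GENERATION_FACTORS = {
    "Hopper -> Blackwell Ultra": 35.0,
    "Blackwell -> Vera Rubin": 10.0,
}


def cumulative_factor(factors: dict) -> float:
    """Multiply the per-generation factors (assumes they compose cleanly)."""
    return prod(factors.values())


def cost_by_generation(hopper_cost: float, factors: dict) -> list:
    """Walk a baseline cost down through each generational step."""
    costs = [hopper_cost]
    for factor in factors.values():
        costs.append(costs[-1] / factor)
    return costs


# Hypothetical baseline: $10 per million tokens on Hopper.
total = cumulative_factor(GENERATION_FACTORS)
costs = cost_by_generation(10.0, GENERATION_FACTORS)

print(f"Cumulative reduction vs. Hopper: {total:.0f}x")
for label, cost in zip(["Hopper", "Blackwell Ultra", "Vera Rubin"], costs):
    print(f"{label}: ${cost:.4f} per 1M tokens")
```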

Global Shifts: The Rise of Sovereign AI

🎯 BLUF

Sovereign AI is the concept of nations building and controlling their own AI infrastructure — training models on local data, in local languages, within national regulatory boundaries. In 2025–2026, countries from India to Germany to Indonesia are partnering with NVIDIA to build national AI factories. This is about economic competitiveness and data security at a geopolitical scale.

The sovereign AI trend is one of the most structurally significant — and least discussed — dimensions of NVIDIA's growth. It represents a category of demand that no software-only competitor can address, because it is fundamentally about physical infrastructure deployed within national borders.

India's AI Mission: A Case Study in Scale

India's IndiaAI Mission has committed over $1.2 billion in federal funding to build out national AI compute capacity [Source: India AI Impact Summit 2026, NVIDIA Blog, Feb 2026]. The targets are substantial:

Yotta Data Services is deploying over 20,000 NVIDIA Blackwell Ultra GPUs via its Shakti Cloud, with campuses in Navi Mumbai and Greater Noida [Source: NVIDIA India AI Mission Blog]

Larsen & Toubro (L&T) and NVIDIA announced a gigawatt-scale AI factory venture, with expansions to 30 MW in Chennai and a 40 MW facility in Mumbai [Source: India AI Impact Summit 2026]

India is on track to cross 100,000 GPUs by end of 2026 — tripling its capacity in under a year [Source: Union IT Minister Ashwini Vaishnaw]

"AI is driving the largest infrastructure buildout in human history — everyone will use it, every company will be powered by it, and every country will build it."— Jensen Huang, Founder & CEO, NVIDIA (India AI Impact Summit 2026)

Europe's Sovereign Layer

Deutsche Telekom and NVIDIA launched the world's first Industrial AI Cloud — a sovereign, enterprise-grade platform built on NVIDIA infrastructure within Germany. This facility serves as the blueprint for European sovereign AI: GPU-dense, regulatory-compliant, and capable of running agentic models and physical AI for manufacturing, automotive, and robotics [Source: NVIDIA National Transformation Page].

Telecom operators across Europe — Orange, Swisscom, Telefónica, Telenor — are following the same playbook: deploying NVIDIA AI factory infrastructure as sovereign national platforms, then offering GPU-as-a-service to enterprises and governments within their regulatory jurisdictions.

Enterprise Adoption: Co-Engineering at Hyperscale

🎯 BLUF

Meta, Google, Microsoft, and AWS are not simply purchasing NVIDIA hardware — they are co-engineering infrastructure with NVIDIA, integrating Blackwell and Vera Rubin into their own cloud architectures. Microsoft was the first hyperscaler to power up Vera Rubin NVL72 systems. AWS is deploying over 1 million NVIDIA GPUs across its global cloud regions beginning in 2026.

Microsoft Azure: Deployed hundreds of thousands of liquid-cooled Grace Blackwell GPUs globally. Azure was the first hyperscale cloud to power up Vera Rubin NVL72 systems, with global rollout continuing through 2026. Vera Rubin will also power Microsoft's next-generation Fairwater AI superfactory sites [Source: NVIDIA GTC 2026 Blog, March 2026].

AWS: Deploying over 1 million NVIDIA GPUs across global cloud regions, beginning in 2026. AWS and NVIDIA are collaborating on Spectrum networking and full AI factory architecture — designed to operate as unified compute engines for training and deploying next-generation AI systems [Source: NVIDIA GTC 2026 Blog].

Google Cloud: Plans to be among the first cloud providers to offer Vera Rubin NVL72 rack-scale systems in H2 2026, integrated into their AI Hypercomputer architecture [Source: Google Cloud Blog, March 2026].

Meta: Part of a $27 billion infrastructure deal with Nebius Group that includes $12 billion in dedicated Vera Rubin capacity [Source: Tech Insider, NVIDIA Rubin GPU Analysis].

Challenges and the Competitive Landscape

🎯 BLUF

NVIDIA faces real challenges: energy consumption at gigawatt scale, US export restrictions limiting access to high-growth markets, and rising custom silicon from Google (TPUs), Amazon (Trainium), and others. The nuanced reality is that NVIDIA's training moat remains robust, while its inference market share faces structural pressure from purpose-built ASICs optimized for specific workloads.

Energy: The Gigawatt Problem

A single Vera Rubin NVL72 rack operates at high power density. At the scale of an AI factory, with thousands of racks, this adds up to power requirements that run from tens of megawatts into the hundreds per facility, and toward a gigawatt for the largest campuses. L&T's planned gigawatt-scale AI factory in India is not using the word "gigawatt" for effect; it reflects the actual power infrastructure required to run production-grade AI compute at national scale. The companies building AI factories are increasingly in the business of energy infrastructure, not just computing.
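The jump from rack-level power to facility-level power is straightforward arithmetic. The sketch below assumes a hypothetical 130 kW per rack and a hypothetical power usage effectiveness (PUE) of 1.2 to cover cooling and distribution overhead; neither value comes from this article or from an NVIDIA specification.

```python
import math

# Hypothetical planning inputs, not vendor specifications.
KW_PER_RACK = 130.0   # assumed IT load per rack
PUE = 1.2             # assumed facility overhead multiplier


def facility_power_mw(racks: int, kw_per_rack: float = KW_PER_RACK,
                      pue: float = PUE) -> float:
    """Total facility draw in megawatts, including overhead via PUE."""
    return racks * kw_per_rack * pue / 1000


def racks_within_budget(budget_mw: float, kw_per_rack: float = KW_PER_RACK,
                        pue: float = PUE) -> int:
    """Largest whole number of racks that fits inside a power budget."""
    return math.floor(budget_mw * 1000 / (kw_per_rack * pue))


for racks in (250, 1_000, 5_000):
    print(f"{racks:>5} racks -> {facility_power_mw(racks):7.1f} MW")

for budget in (30, 40, 1_000):
    print(f"{budget:>5} MW budget -> {racks_within_budget(budget):,} racks")
```

The 30 MW and 40 MW budgets mirror the Chennai and Mumbai figures mentioned earlier, and 1,000 MW stands in for a gigawatt-scale site; under these assumed inputs the gap between them is a few hundred racks versus several thousand.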

Export Restrictions

US export control regulations have materially limited NVIDIA's ability to sell its highest-performance chips in certain markets, most significantly China. This created an opening for domestic Chinese alternatives — most notably Huawei's Ascend series — to capture market share that NVIDIA cannot legally serve. The long-term strategic impact of this remains one of the most significant uncertainties in the AI hardware market through 2030.

Custom Silicon: The Inference Pressure

The most credible competitive challenge comes from purpose-built inference chips. Google's TPU v7 reportedly delivers 67% better energy efficiency for reasoning model inference, and Google's TPU ecosystem accounts for over 52% of global AI server ASIC volume [Source: FinancialContent/TokenRing Analysis, Jan 2026].

The nuanced read: NVIDIA's training moat remains largely intact through 2028. Custom ASICs win on efficiency for specific, predictable inference workloads — but training large foundation models still demands the flexibility and programmability that GPUs and CUDA provide [Source: Introl Custom Silicon Inflection Report, Feb 2026].

| Challenger | Product | Strength | Limitation vs. NVIDIA |
| --- | --- | --- | --- |
| Google | TPU v7 | Inference efficiency, JAX ecosystem | Captive to Google Cloud; no open CUDA equivalent |
| Amazon | Trainium 2 | AWS-native training cost | Limited third-party software ecosystem |
| AMD | MI300X / MI400 | HBM memory capacity, price | Software maturity gap vs. CUDA |
| Huawei | Ascend 910C | China market dominance | Limited global reach; export-restricted market |
| Groq | LPU (Groq 3) | Ultra-low latency inference | Limited flexibility for training workloads |

Future Prediction: AI Infrastructure as the New Oil Pipeline

🎯 BLUF

Between 2027 and 2030, AI infrastructure will evolve from data center-centric computing to a distributed intelligence grid — spanning cloud, edge, orbit, and embedded systems. NVIDIA is positioning for every layer of this grid through Physical AI, robotics platforms, and Space-1, its orbital computing initiative. The organizations that control this infrastructure layer will hold a structural economic advantage analogous to oil pipeline operators in the 20th century.

Oil pipelines were not just pipes. They were the physical layer that determined which refineries could reach which markets, which cities could industrialize, and which nations could project economic power. The companies that built and owned that infrastructure captured value for generations — not because they were the best chemists, but because they controlled the flow of the resource.

AI infrastructure in 2026 has the same structural character. The organizations building AI factories today — at national scale, with gigawatt power contracts, liquid cooling systems, and proprietary interconnects — are not just running data centers. They are building the pipelines through which the next decade of economic value will flow.

Physical AI and Robotics (2027–2028)

NVIDIA's Omniverse platform and physical AI stack are the foundation for the next wave: AI systems that operate in the physical world. Factory-scale digital twins, AI-powered robotics coordination, and autonomous vehicle inference all require persistent, low-latency compute deployed close to the physical systems they manage. This creates demand for edge AI factories — smaller-scale inference clusters deployed inside manufacturing facilities, logistics hubs, and transportation networks.

Orbital Computing (2028+)

NVIDIA's Space-1 Vera Rubin initiative — designing AI data centers for orbital deployment — sounds audacious until you consider the latency requirements of global AI inference. Compute in low-Earth orbit means any location on Earth can reach a compute node within milliseconds, regardless of terrestrial infrastructure. This is a 2028–2030 horizon, but NVIDIA is engineering for it today [Source: NVIDIA GTC 2026 Blog].

The AI Grid

The end state of all this infrastructure build-out is what NVIDIA calls the AI grid — a unified, orchestrated system linking sovereign AI factories, regional inference hubs, edge sites, and eventually orbital compute into a single addressable infrastructure layer. AI applications will route workloads to the optimal location for latency, cost, and regulatory compliance automatically. That vision is the logical extension of everything NVIDIA is building in 2026.
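The routing behavior described above can be sketched as a filter followed by a ranking step. This is an illustrative toy, not NVIDIA's orchestration software: the `Site` type, the site names, and all latency and cost values are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Site:
    """A hypothetical compute location in the grid."""
    name: str
    jurisdiction: str
    latency_ms: float
    cost_per_million_tokens: float


def route(sites: list, allowed_jurisdictions: set,
          max_latency_ms: float) -> Optional[Site]:
    """Pick the cheapest site that satisfies compliance and latency limits.

    Compliance and latency are hard constraints; cost is the objective,
    with latency as the tie-breaker.
    """
    eligible = [
        s for s in sites
        if s.jurisdiction in allowed_jurisdictions and s.latency_ms <= max_latency_ms
    ]
    if not eligible:
        return None
    return min(eligible, key=lambda s: (s.cost_per_million_tokens, s.latency_ms))


# Hypothetical sites and prices.
SITES = [
    Site("sovereign-factory-in", "IN", 35.0, 0.40),
    Site("regional-hub-de", "DE", 20.0, 0.55),
    Site("edge-site-de", "DE", 4.0, 0.90),
    Site("hyperscale-us", "US", 110.0, 0.25),
]

choice = route(SITES, allowed_jurisdictions={"DE"}, max_latency_ms=25.0)
print(choice.name if choice else "no eligible site")
```

Treating regulatory compliance as a hard filter rather than a weighted score is a deliberate choice in this sketch: a cheaper site outside the permitted jurisdiction is never selected, which matches the sovereign AI premise discussed earlier.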

Frequently Asked Questions

Is NVIDIA more than a GPU company?

Yes — significantly. NVIDIA is a full-stack AI infrastructure company. Its revenue of $215.9 billion in FY2026 came primarily from data center systems, networking (NVLink, Spectrum-X), software (CUDA, AI Enterprise), and AI factory designs — not consumer graphics. GPUs are the compute engine, but the broader ecosystem is what generates durable competitive advantage.

Why are AI factories important?

AI factories are purpose-built computing facilities that manufacture intelligence — producing AI model outputs (tokens) at scale the way industrial plants produce goods. They matter because the volume and cost of token generation determines whether AI applications are economically viable at scale. NVIDIA's AI factory reference designs maximize tokens-per-watt and minimize cost-per-token.

What is Sovereign AI?

Sovereign AI is a nation's ability to build, own, and operate its own AI infrastructure — training models on domestic data, in local languages, within national regulatory boundaries. Countries like India, Germany, and Indonesia are building NVIDIA-powered sovereign AI clouds to maintain data residency, national security, and economic competitiveness in AI without depending entirely on foreign cloud providers.

Will custom chips replace NVIDIA?

Not entirely, and not soon. Custom ASICs (Google TPUs, Amazon Trainium) are winning inference market share for specific, predictable workloads where efficiency matters more than flexibility. But NVIDIA's training dominance — backed by 19+ years of CUDA ecosystem investment — remains largely intact through at least 2028. The most likely outcome is a multi-vendor inference market alongside continued NVIDIA training dominance.

What is the Vera Rubin platform and why does it matter?

Vera Rubin is NVIDIA's next-generation AI infrastructure platform, comprising six co-designed chips unified in the NVL72 rack-scale system. It delivers up to 10x lower cost-per-token than Blackwell and is specifically engineered for agentic AI workloads. Volume production is ramping in H2 2026, with Microsoft Azure and Google Cloud among the first deployers.

What is CUDA and why is it so hard to replace?

CUDA is NVIDIA's parallel computing platform, first released in 2006. The barrier to replacing it is not the language itself but the 19+ years of accumulated libraries, tools, framework optimizations, and developer expertise built on top of it. Every major AI framework is first optimized for CUDA. Switching costs — in engineering time and performance risk — exceed the benefits for most organizations.

How does NVLink differ from standard networking?

Standard Ethernet introduces latency and bandwidth limitations that bottleneck multi-thousand-GPU training jobs. NVLink is a proprietary, high-bandwidth, low-latency interconnect designed specifically for GPU-to-GPU communication. NVLink 6 in Vera Rubin delivers 260 TB/s in aggregate within a rack, or about 3.6 TB/s per GPU across 72 GPUs, which is roughly 20x the bandwidth of high-speed Ethernet at a fraction of the latency.

What is NVIDIA's revenue from AI infrastructure in 2026?

NVIDIA reported FY2026 full-year revenue of $215.9 billion, up 65% year-over-year, with record Q4 revenue of $68.1 billion (up 73% YoY). Data center revenue — driven by Blackwell GPU systems, networking, and AI software — comprised the overwhelming majority, reflecting NVIDIA's transformation from chip vendor to full-stack infrastructure company.

How is agentic AI driving demand for new infrastructure?

Agentic AI systems, which plan and execute autonomously across multi-step tasks, can make 50 to 200 inference calls per user session where a simple chatbot interaction makes one. At production scale across millions of enterprise users, this creates structural demand for real-time, low-latency inference clusters. Blackwell Ultra delivers up to 35x lower cost for agentic AI vs. Hopper; Vera Rubin extends this with up to 10x better throughput per watt.

What is the DGX SuperPOD?

The DGX SuperPOD is NVIDIA's reference architecture for large-scale AI training clusters — a pre-validated, turnkey system combining DGX compute nodes, NVLink networking, InfiniBand fabric, and storage. It allows enterprises and cloud providers to deploy production-scale AI training infrastructure without custom engineering, reducing time-to-deployment while ensuring NVIDIA-validated performance.

References & Further Reading

All statistics, performance figures, and technical specifications cited above are drawn from the sources listed below, which include NVIDIA's official communications and third-party reporting and research.

NVIDIA Corporation — FY2026 Q4 Earnings Press Release (SEC 8-K Filing, Feb 25, 2026)

https://www.sec.gov/cgi-bin/browse-edgar?action=getcompany&CIK=nvda

NVIDIA Technical Blog — Inside the NVIDIA Vera Rubin Platform: Six New Chips, One AI Supercomputer (Jan 5, 2026)

NVIDIA Newsroom — NVIDIA Vera Rubin Opens Agentic AI Frontier (March 16, 2026)

NVIDIA Newsroom — NVIDIA Kicks Off the Next Generation of AI With Rubin

NVIDIA Blog — NVIDIA GTC 2026 Live Updates (March 2026)

NVIDIA Blog — India Fuels Its AI Mission With NVIDIA (Feb 18, 2026)

blogs.nvidia.com/blog/india-ai-mission-infrastructure-models

Google Cloud Blog — Google Cloud AI Infrastructure at NVIDIA GTC 2026 (March 16, 2026)

cloud.google.com/blog/products/compute/google-cloud-ai-infrastructure-at-nvidia-gtc-2026

Tom's Hardware — NVIDIA Launches Vera Rubin NVL72 AI Supercomputer at CES (Jan 5, 2026)

Introl Research — NVIDIA's Unassailable Position: CUDA Moat Analysis (Jan 22, 2026)

Introl Research — Custom Silicon Inflection 2026 (Feb 23, 2026)

FourFold AI — AI Research, Trends & Infrastructure Insights

NVIDIA — Sovereign AI National Transformation Page

NVIDIA — Vera Rubin Platform Product Page

ETV Bharat — India AI Impact Summit 2026: L&T, NVIDIA Announce Sovereign AI Factory Venture (Feb 18, 2026)

FinancialContent / TokenRing — The Great Decoupling: How Cloud Giants Are Building Custom 3nm Silicon (Jan 29, 2026)

Disclaimer:

This article is intended for informational and educational purposes only. The views expressed represent the author's analysis based on publicly available data and cited sources at the time of publication. Performance figures, financial data, and market projections are sourced from third-party research and NVIDIA's official communications; they are subject to change. This article does not constitute investment, financial, or legal advice. For full terms, please review the FourFold AI Disclaimer.

© 2026 FourFold AI · fourfoldai.com
